How to Create a Chatbot for a Website with LangChain and Groq

Chatbots are transforming the way users interact with websites and services, helping them navigate content and find answers quickly. Yet, traditional chatbots often fall short due to generic responses, slow replies, and a limited understanding of the site's content.

To overcome these limitations, a chatbot needs efficient content retrieval, workflow orchestration, and fast inference, which is what LangChain and Groq provide. LangChain orchestrates the LLM workflow, handles document processing, and structures data retrieval, while Groq provides low-latency inference so answers are generated quickly.

This guide explains how to build a Website Q&A Chatbot that answers questions about a site's content in real time, walking through the process from content preparation to serving the chatbot through an API and a web interface.

Prerequisites

The following are required to build the Website Q&A Chatbot:

- Python 3.10+

- Familiarity with FastAPI and Streamlit

- API keys for Google Generative AI and Groq

- Access to the website content for scraping

- Python libraries:

```bash
pip install pymupdf langchain langchain-core langchain-community langchain-text-splitters langchain-google-genai langchain-groq python-dotenv faiss-cpu fastapi uvicorn streamlit httpx
```

Project Setup

A clear project structure helps maintain organization and simplifies development. Here is the recommended layout:

```text
chatbot-project/
├── app/
│   ├── faiss_index/            # FAISS vector index files
│   ├── vectorstore_loader.py   # Script to load vector store
│   ├── chatbot_api.py          # FastAPI backend
│   └── chatbot_ui.py           # Streamlit frontend (optional)
├── data/
│   └── website_content.pdf     # Cleaned website content
├── .env                        # API keys
├── website_content.html        # Raw HTML content
├── requirements.txt            # All dependencies
└── vectorstore_builder.py      # Script to build FAISS vector store
```

The .env file should contain the API keys:

```text
GOOGLE_API_KEY=your_google_api_key
GROQ_API_KEY=your_groq_api_key
```

Steps to Create the Website Q&A Chatbot

Step 1: Creating the Vector Store

The first step is to convert website content into a searchable vector store that the chatbot can query. This involves scraping content, cleaning it, splitting it into chunks, generating embeddings, and storing them in FAISS.

1.1 Scrape Website Content

Download the website content as HTML:

```bash
curl https://www.website.com/ > website_content.html
```

Clean the content to remove headers, footers, scripts, and other non-content elements. Then convert the cleaned content into a document format for processing; this guide exports it as a PDF saved in the data/ folder, because the loader in the next step reads PDFs.
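The cleaning step can be done with any HTML tool. As a minimal sketch, assuming the raw page is in website_content.html and that anything inside `<script>`, `<style>`, `<noscript>`, `<header>`, `<footer>`, and `<nav>` is noise, Python's built-in `html.parser` can extract the remaining visible text. The `clean_html` function and the skipped-tag list are illustrative assumptions, not part of the original project, and the resulting text still has to be exported to PDF before the next step.

```python
from html.parser import HTMLParser

# Tags treated as non-content (an assumption; adjust for the target site)
SKIP_TAGS = {"script", "style", "noscript", "header", "footer", "nav"}


class ContentExtractor(HTMLParser):
    """Collect visible text, ignoring everything nested inside SKIP_TAGS."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0  # > 0 while inside a skipped element
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self.skip_depth == 0:
            self.parts.append(text)


def clean_html(html: str) -> str:
    """Return the page's visible text, one text fragment per line."""
    parser = ContentExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.parts)


sample = "<header>Menu</header><main><h1>Pricing</h1><p>Plans start at $10.</p></main><script>track();</script>"
print(clean_html(sample))  # prints "Pricing" and "Plans start at $10." on separate lines
```

In practice, the contents of website_content.html would be passed to `clean_html` and the output saved or converted to PDF in data/.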

1.2 Load Content into LangChain Documents

Parse the cleaned PDFs into LangChain Document objects that store both the text and metadata such as the page number and source file, giving a structured format ready for splitting and embedding.

This functionality is implemented in vectorstore_builder.py using PyMuPDF (fitz), which efficiently extracts text from each PDF page.

```python
import fitz  # PyMuPDF
from langchain_core.documents import Document
import os

def load_pdf_bytes(pdf_path: str) -> list[Document]:
    with open(pdf_path, "rb") as f:
        content = f.read()

    pdf_doc = fitz.open(stream=content, filetype="pdf")
    docs = []

    for i in range(pdf_doc.page_count):
        text = pdf_doc[i].get_text()
        if text.strip():  # Skip pages with no extractable text
            docs.append(Document(
                page_content=text,
                metadata={"source": os.path.basename(pdf_path), "page": i + 1}
            ))

    pdf_doc.close()
    return docs
```

1.3 Split Text for Better Processing

Break each document into smaller, overlapping chunks to improve retrieval accuracy and maintain context across long documents. LangChain’s RecursiveCharacterTextSplitter can be used to break down the text into chunks of a specified size.

```python
from langchain_text_splitters import RecursiveCharacterTextSplitter

splitter = RecursiveCharacterTextSplitter(
    chunk_size=1000,    # Maximum number of characters per chunk
    chunk_overlap=200   # Number of overlapping characters between chunks
)

# all_docs is the list of Documents returned by load_pdf_bytes() in step 1.2
all_docs = load_pdf_bytes("data/website_content.pdf")
chunks = splitter.split_documents(all_docs)
```
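To make `chunk_size` and `chunk_overlap` concrete, here is a simplified sliding-window splitter in plain Python. It is an illustration only: `RecursiveCharacterTextSplitter` first tries to break on separators such as blank lines, newlines, and spaces before falling back to single characters, so its real chunk boundaries will differ. The function name `split_with_overlap` is hypothetical.

```python
def split_with_overlap(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Cut text into windows of at most chunk_size characters, where each
    window starts chunk_overlap characters before the previous one ended."""
    if chunk_overlap >= chunk_size:
        raise ValueError("chunk_overlap must be smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


print(split_with_overlap("abcdefghij", chunk_size=4, chunk_overlap=2))
# ['abcd', 'cdef', 'efgh', 'ghij']
```

With the guide's settings (1000/200), each window advances 800 characters, so in this simplified model a 2,600-character page yields three chunks, and the last 200 characters of each chunk reappear at the start of the next one.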

1.4 Generate Embeddings

After splitting the text into manageable chunks, convert each chunk into a vector embedding using Google Generative AI. Embeddings capture the semantic meaning of the text: chunks with similar meaning map to nearby vectors, which is what makes similarity search over the site's content possible.

```python
from langchain_google_genai import GoogleGenerativeAIEmbeddings
from dotenv import load_dotenv
import os

load_dotenv()  # Read GOOGLE_API_KEY from the .env file

embeddings = GoogleGenerativeAIEmbeddings(
    model="models/embedding-001",
    google_api_key=os.getenv("GOOGLE_API_KEY")
)
```
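Cosine similarity is one common way to measure how close two embeddings are. The sketch below computes it in plain Python using made-up 3-dimensional vectors; the values are invented for illustration and are not real output from the embedding model, whose vectors have many more dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between a and b (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


# Toy vectors standing in for real embeddings (values are made up)
refund_question = [0.9, 0.1, 0.0]
refund_policy_chunk = [0.8, 0.2, 0.1]
careers_chunk = [0.0, 0.3, 0.9]

print(cosine_similarity(refund_question, refund_policy_chunk))  # about 0.98
print(cosine_similarity(refund_question, careers_chunk))        # about 0.03
```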

1.5 Store Embeddings in a Vector Database

After generating embeddings, store them in a vector database for efficient retrieval. FAISS (Facebook AI Similarity Search) is used to store and query embeddings, enabling fast semantic search across large documents.

```python
from langchain_community.vectorstores import FAISS
import os

vectorstore = FAISS.from_documents(chunks, embeddings)

index_path = "app/faiss_index"
os.makedirs(os.path.dirname(index_path), exist_ok=True)
vectorstore.save_local(index_path)  # Save the vector store locally

print(f"Vectorstore saved to '{index_path}/'")
```
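By default, LangChain's `FAISS.from_documents` builds a flat (exact) index that compares a query vector against every stored vector using Euclidean (L2) distance, and the retriever's `search_kwargs={"k": 3}` in Step 2 asks for the three nearest chunks. The brute-force function below shows the same idea in plain Python; it is a conceptual sketch, not FAISS's optimized implementation, and `top_k_l2` is a hypothetical helper.

```python
import math


def top_k_l2(query: list[float], vectors: list[list[float]], k: int = 3) -> list[tuple[int, float]]:
    """Return (index, distance) pairs for the k stored vectors closest to query."""
    scored = [(i, math.dist(query, v)) for i, v in enumerate(vectors)]  # L2 distance
    scored.sort(key=lambda pair: pair[1])  # smallest distance = most similar
    return scored[:k]


stored = [[0.8, 0.2, 0.1], [0.0, 0.3, 0.9], [0.7, 0.0, 0.2], [0.1, 0.9, 0.1]]
print(top_k_l2([0.9, 0.1, 0.0], stored, k=2))  # vectors 0 and 2 are nearest
```

A flat L2 index in FAISS reports squared distances rather than plain distances, which produces the same ranking.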

1.6 Automating the Workflow

To make the entire process efficient, automate parsing multiple PDFs, splitting text, generating embeddings, and building the FAISS vector store. This ensures consistency and saves time when working with large document collections.

The process_folder function handles all these steps for a folder of PDFs:

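```python
# Uses the imports, load_pdf_bytes(), and splitter from the snippets above (same file)

def generate_embeddings():
    """Create the embedding model from step 1.4."""
    return GoogleGenerativeAIEmbeddings(
        model="models/embedding-001",
        google_api_key=os.getenv("GOOGLE_API_KEY")
    )

def build_and_save_vectorstore(chunks, embeddings, index_path):
    """Build the FAISS index from step 1.5 and save it to index_path."""
    vectorstore = FAISS.from_documents(chunks, embeddings)
    os.makedirs(index_path, exist_ok=True)
    vectorstore.save_local(index_path)
    print(f"Vectorstore saved to '{index_path}/'")
    return vectorstore

def process_folder(folder_path: str, index_path="app/faiss_index"):
    """Automate the process of parsing PDFs, splitting, embedding, and saving the vector store."""
    all_docs = []
    for filename in os.listdir(folder_path):
        if filename.lower().endswith(".pdf"):  # Process only PDF files
            full_path = os.path.join(folder_path, filename)
            docs = load_pdf_bytes(full_path)
            all_docs.extend(docs)

    if not all_docs:
        raise ValueError(f"No PDF files found in '{folder_path}' or all PDFs are empty.")

    chunks = splitter.split_documents(all_docs)  # Split documents into chunks
    embeddings = generate_embeddings()  # Generate embeddings
    vectorstore = build_and_save_vectorstore(chunks, embeddings, index_path)  # Build and save the vectorstore
    return vectorstore

# Main execution
if __name__ == "__main__":
    vectorstore = process_folder("data")  # Run the process for the PDFs in the "data" folder
```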
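One detail worth noting: `os.listdir` returns file names in arbitrary order, so the order in which pages are added to the index can differ between runs. If reproducible builds matter, a small helper such as the hypothetical `find_pdfs` below can collect PDF paths in sorted order before they are loaded; it keeps the same case-insensitive `.pdf` check used in `process_folder`.

```python
from pathlib import Path


def find_pdfs(folder_path: str) -> list[Path]:
    """Return all .pdf files directly inside folder_path, sorted by path."""
    folder = Path(folder_path)
    if not folder.is_dir():
        raise FileNotFoundError(f"Folder not found: '{folder_path}'")
    return sorted(
        p for p in folder.iterdir()
        if p.is_file() and p.suffix.lower() == ".pdf"  # case-insensitive, like process_folder
    )
```

Inside `process_folder`, the loop could then iterate over `find_pdfs(folder_path)` and pass `str(pdf_path)` to `load_pdf_bytes`.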

Step 2: Creating the Q/A Chatbot

With the vector store ready, let's build a chatbot by loading the FAISS index, creating a retrieval-based QA chain with Groq’s LLM, and setting up a FastAPI backend.

2.1 Load the Vector Store

Once the FAISS vector store has been built and saved, it can be loaded into memory for querying, so the FastAPI backend can retrieve relevant chunks and pass them to Groq's model. The following code in vectorstore_loader.py loads it:

```python
from langchain_community.vectorstores import FAISS
from langchain_google_genai import GoogleGenerativeAIEmbeddings
from dotenv import load_dotenv
import os

load_dotenv()

# Must be the same embedding model that was used to build the index
embeddings = GoogleGenerativeAIEmbeddings(
    model="models/embedding-001",
    google_api_key=os.getenv("GOOGLE_API_KEY")
)

def load_vectorstore(path="app/faiss_index"):
    return FAISS.load_local(path, embeddings, allow_dangerous_deserialization=True)
```
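`FAISS.save_local` writes two files into the target folder: `index.faiss` (the vectors) and `index.pkl` (the pickled docstore and ID mapping). The pickle file is why loading requires `allow_dangerous_deserialization=True`: unpickling can execute code, so only load indexes you built yourself. A small guard like the hypothetical `check_index` below, assuming the default `index_name="index"`, gives a clearer error than a failed load when the builder script has not been run yet.

```python
from pathlib import Path


def check_index(path: str = "app/faiss_index", index_name: str = "index") -> None:
    """Raise a helpful error if the files written by save_local are missing."""
    folder = Path(path)
    missing = [
        name for name in (f"{index_name}.faiss", f"{index_name}.pkl")
        if not (folder / name).is_file()
    ]
    if missing:
        raise FileNotFoundError(
            f"Missing {', '.join(missing)} in '{path}'. "
            "Run vectorstore_builder.py to build the index first."
        )
```

Calling `check_index(path)` at the top of `load_vectorstore` would surface the problem at server startup.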

2.2 Create a Retrieval-Based QA Chain

Groq offers free-tier access (with rate limits) to hosted LLMs with low-latency inference. This guide uses the llama-3.1-8b-instant model to create a retrieval-based QA chain that searches the FAISS vector store and generates answers. The following code is implemented in chatbot_api.py:

```python
# RetrievalQA is deprecated in recent LangChain versions; this import works with LangChain 0.x
from langchain.chains import RetrievalQA
from langchain_groq import ChatGroq
import os

def get_qa_chain(vectorstore):
    llm = ChatGroq(
        groq_api_key=os.getenv("GROQ_API_KEY"),
        model_name="llama-3.1-8b-instant"
    )

    # Return the 3 most similar chunks for each question
    retriever = vectorstore.as_retriever(search_type="similarity", search_kwargs={"k": 3})

    qa_chain = RetrievalQA.from_chain_type(
        llm=llm,
        retriever=retriever,
    )

    return qa_chain
```
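`RetrievalQA.from_chain_type` uses the "stuff" chain type by default: the retrieved chunks are concatenated ("stuffed") into a single prompt together with the question, and the LLM answers from that context. The function below imitates that step in plain Python so the data flow is visible. Its wording is loosely modeled on LangChain's default QA prompt, but it is an illustrative assumption, and `build_stuff_prompt` is a hypothetical name.

```python
def build_stuff_prompt(question: str, chunks: list[str]) -> str:
    """Concatenate retrieved chunks into one context block, then append the question."""
    context = "\n\n".join(chunks)  # all k retrieved chunks go into a single prompt
    return (
        "Use the following pieces of context to answer the question. "
        "If you don't know the answer, say that you don't know.\n\n"
        f"{context}\n\n"
        f"Question: {question}\n"
        "Helpful Answer:"
    )


retrieved = ["Support is available 9am-5pm.", "Email the support team for help."]
print(build_stuff_prompt("When is support available?", retrieved))
```

Because every retrieved chunk lands in one prompt, the prompt size grows with `k`: with `k=3` and `chunk_size=1000`, up to about 3,000 characters of context are sent per question, which must fit in the model's context window.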

2.3 Set Up the FastAPI Backend

A FastAPI backend is created to serve the chatbot. Incoming POST requests with a question are processed by the Groq-powered QA chain, which returns the generated answer. The following code is placed in chatbot_api.py:

```python
from fastapi import FastAPI
from pydantic import BaseModel
from langchain.chains import RetrievalQA
from langchain_groq import ChatGroq
from dotenv import load_dotenv
from app.vectorstore_loader import load_vectorstore
import os

load_dotenv()

app = FastAPI()

def get_qa_chain(vectorstore):
    llm = ChatGroq(
        groq_api_key=os.getenv("GROQ_API_KEY"),
        model_name="llama-3.1-8b-instant"
    )

    retriever = vectorstore.as_retriever(search_type="similarity", search_kwargs={"k": 3})

    qa_chain = RetrievalQA.from_chain_type(
        llm=llm,
        retriever=retriever,
    )

    return qa_chain

# Load the index and build the chain once, at startup
vectorstore = load_vectorstore()
qa_chain = get_qa_chain(vectorstore)

class Query(BaseModel):
    question: str

@app.post("/chat")
def chat(query: Query):
    # A sync endpoint runs in FastAPI's threadpool, so the blocking LLM call
    # does not stall the event loop. RetrievalQA expects its input under "query".
    result = qa_chain.invoke({"query": query.question})

    return {
        "answer": result["result"],
    }
```

Run the FastAPI server with:

```bash
uvicorn app.chatbot_api:app --reload
```

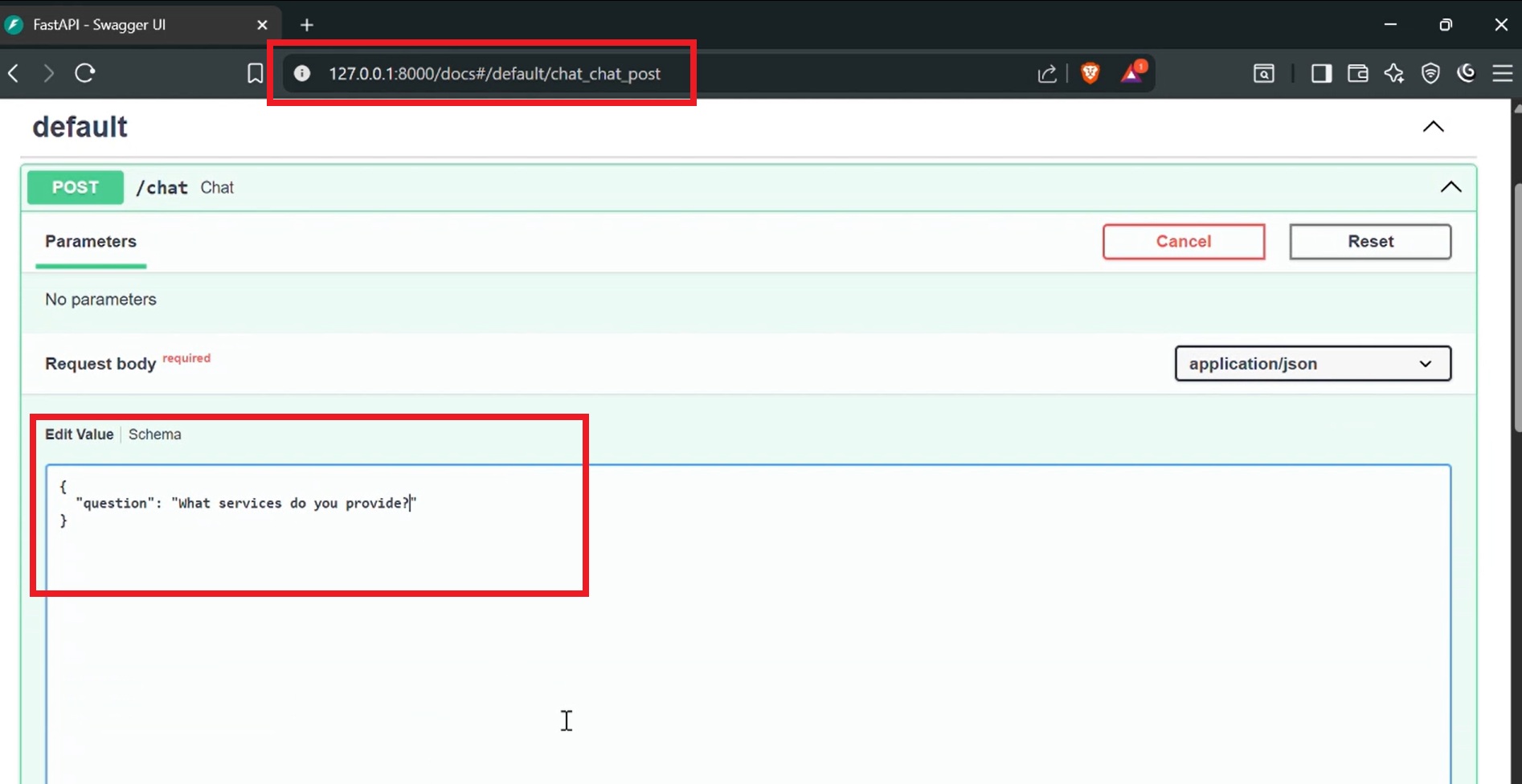

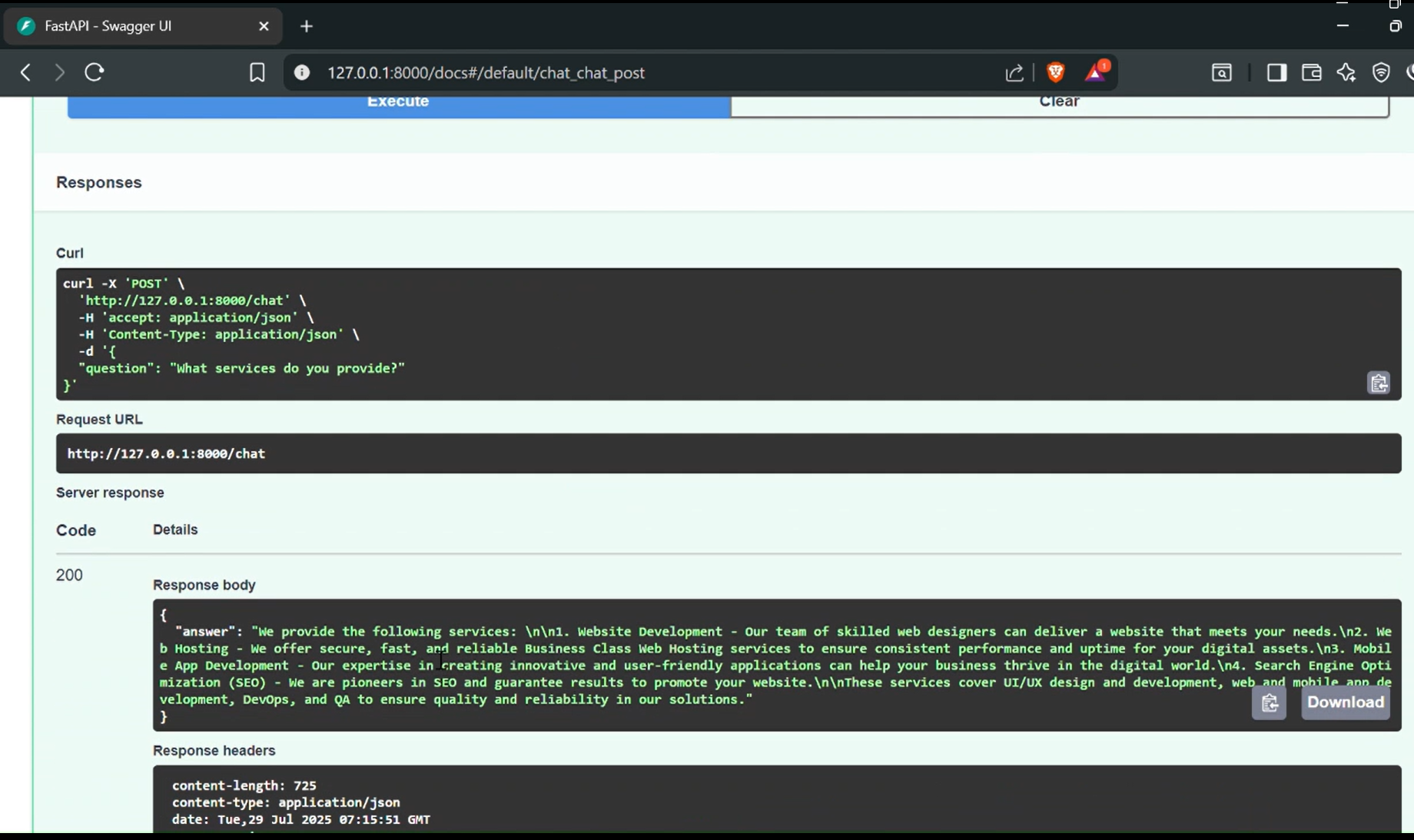

Once running:

- POST requests can be sent to http://localhost:8000/chat with a JSON body of the form {"question": "..."}.

- The server responds with an answer generated by Groq’s model based on the indexed content.

- Swagger UI is available at http://localhost:8000/docs for interactive API testing and documentation.
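Any HTTP client can call the endpoint. As a sketch that uses only Python's standard library, the hypothetical `ask` helper below sends the `{"question": ...}` JSON body that the `Query` model expects and returns the `answer` field. It assumes the server is running locally on uvicorn's default port 8000.

```python
import json
import urllib.request

API_URL = "http://localhost:8000/chat"  # assumes the default uvicorn host and port


def build_request(question: str, url: str = API_URL) -> urllib.request.Request:
    """Create a POST request whose JSON body matches the Query model."""
    body = json.dumps({"question": question}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )


def ask(question: str, url: str = API_URL, timeout: float = 30.0) -> str:
    """Send the question to the backend and return the generated answer."""
    with urllib.request.urlopen(build_request(question, url), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["answer"]
```

With the backend running, `print(ask("What does this website offer?"))` prints the model's answer.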

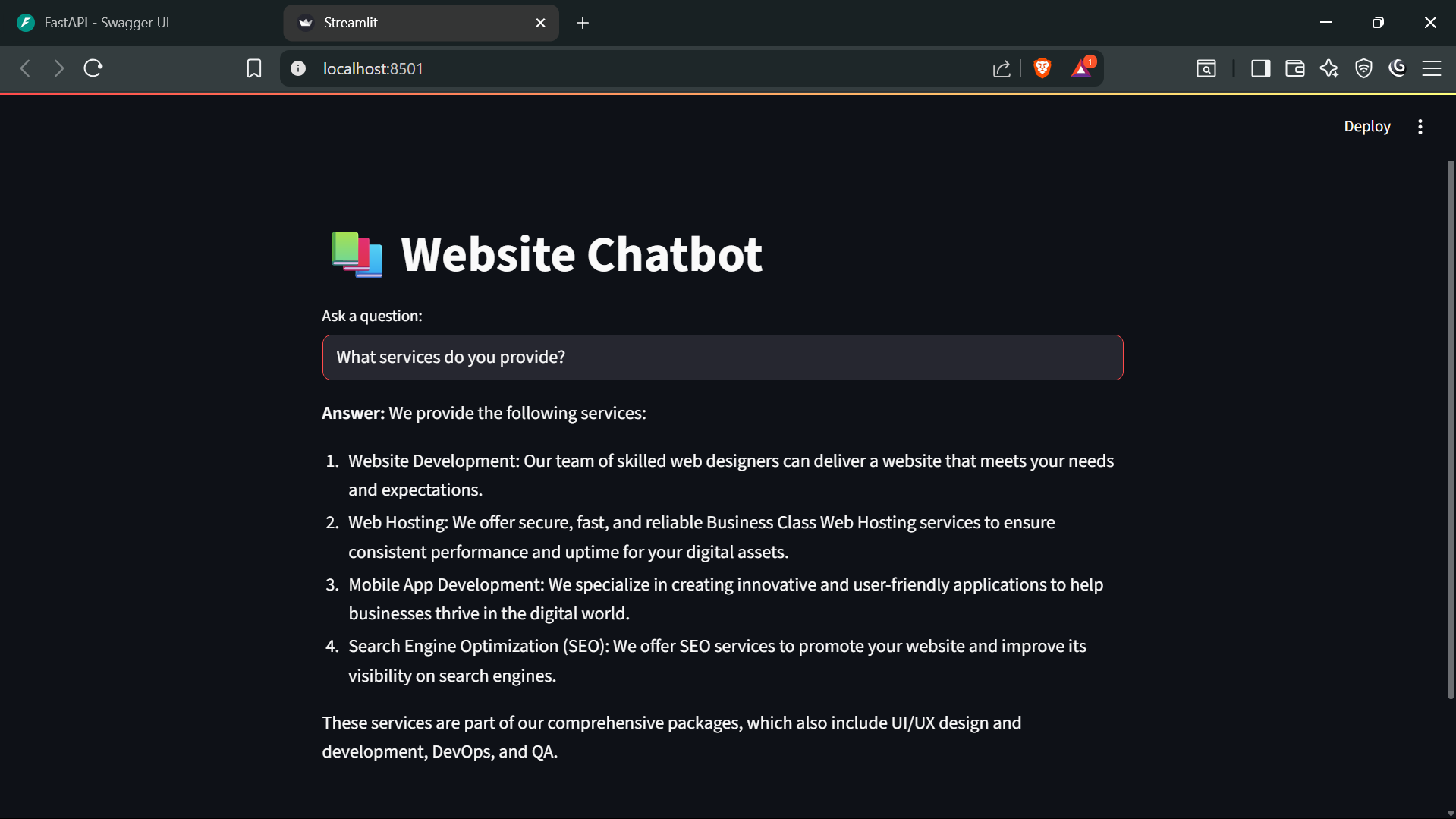

Step 3: Add a Streamlit Frontend (Optional)

For a user-friendly interface, a Streamlit app can be added on top of the FastAPI backend. This simple UI, saved as app/chatbot_ui.py, lets users type questions and see the chatbot's answers.

```python
import streamlit as st
import requests

st.title("📚 Website Chatbot")

query = st.text_input("Ask a question:")

if query:
    with st.spinner("Thinking..."):
        response = requests.post("http://localhost:8000/chat", json={"question": query}, timeout=60)
        response.raise_for_status()  # Surface HTTP errors from the backend
        data = response.json()

    st.write("**Answer:**", data["answer"])
```

Run the backend first:

```bash
uvicorn app.chatbot_api:app --reload
```

Then launch the frontend:

```bash
streamlit run app/chatbot_ui.py
```

Once the UI is running, questions can be entered, and the system queries the FastAPI backend, which uses the Groq model to generate the responses.

Conclusion

This website Q&A chatbot lets users ask questions and receive answers drawn directly from the site's content. It uses Google Generative AI embeddings and a FAISS vector store to retrieve the most relevant content, and Groq's LLM to generate answers from it. With the optional Streamlit frontend, users can interact through a simple interface, showing a practical way to turn website content into an accessible, queryable system.

About Author

Tekbay Admin

Author

Tech enthusiast and writer sharing insights on software development, cloud technologies, and the future of digital innovation.